Change Evaluation: The Seven R’s Revisited

The 2011 version of ITIL introduced the lesser-known change evaluation process. It’s a great addition, and I haven’t seen a lot written about it.

The first thing to know is that not every change requires a formal change evaluation. It’s intended primarily for major changes, where the complexity and scope of the change warrant careful, formalized evaluation.

Change evaluation comes with its own Seven R’s – a handy same-letter list that’s easy to remember, and can help make sure we’ve explored the most common sources of issues with proposed changes.

Here are the seven R’s:

- Who raised the request for change (RFC)?

- What’s the reason for the RFC?

- What return is the change expected to deliver?

- What risks does the change pose?

- What resources are necessary?

- Who is responsible for the key tasks?

- What’s the relationship between this and other requested changes?

The list is not intended to be a set of required fields on the RFC form; modern IT systems are far too complex. It’s intended to be used in the evaluation process to trigger deeper thinking about a proposed change and uncover potentially hidden sources of risk and unintended consequences.

A Great Start

The R’s are a great start, but we really need to do a little more serious detective work. Remember, change evaluation is reserved for major changes, where there’s often a great deal of complexity and high visibility.

Let’s put on our detective hats, and take a closer look.

Raised

Who raised the RFC is a good place to start. It goes well beyond who’s filling out the form or taking administrative responsibility for the RFC. Change evaluation needs to know the role and business function of the requestor.

If it’s IT, what functional unit? Why was it raised by this particular person or group?

If customer, does the requestor represent all user groups of the service? Has the requestor worked with relationship management and/or the service owner? Was it raised by a person who was directly impacted by a recent incident?

Understanding more about the role and context of the requestor helps change evaluation understand the bigger picture.

Again, these are supposed to get you thinking deeper about the proposed change.

Reason for the Change

An analogous question would be “What are you hoping to accomplish with this change?” This one can be tricky because you need to get to the question behind the question. Sometimes RFCs come on the heels of a significant incident. Some changes are proactive changes to avoid a potential incident or problem (such as keeping technology current).

Other changes are raised to address very specific business needs. Some reasons are urgent and pressing and others are related to longer term releases or system upgrades.

The question of what you hope to accomplish helps identify what specific outcomes are anticipated, so change evaluation can help determine if the RFC is likely to deliver them. This question should be answered in plain, unambiguous language that makes very clear the purpose of the proposed change.

In my experience, this question can unearth significant issues. In the evaluation process, it may be discovered that there are differences in understanding the reason behind a change. If the request isn’t clear, it’s harder for the change to be successful in meeting the desired outcome(s).

Return

This one tends to get forgotten because it’s hard to answer. “Return” here is talking about business value. It too should be answered in plain language. An example would be: “RFC will deliver new order processing functionality that will reduce order processing time by 20%”.

The “return” should be specific and measurable, and from the perspective of the business. If it’s an infrastructure RFC, what risk or impact is it seeking to mitigate, and what’s the value of the avoided risk?

Risks

Identifying the risks associated with change requests is critical for an effective change program. The risks here are not limited to technical and infrastructure risks, such as a new firewall rule blocking traffic that an application requires.

When identifying risks, keep a business-impact focus in mind:

- What could happen that would have adverse impact on the business?

- Could the change impact other services running on the same infrastructure? Could there be unanticipated impact to business processes? Will the change increase the support load? Are there multiple changes happening at the same time, and are they compatible?

- Do the results from the test environment accurately predict what will happen in production?

- Will the changed service be able to meet its service level targets?

There are a number of formal risk management methodologies, such as ISO 31000 and Risk IT. If your company uses a formal methodology, that would be a good place to start.

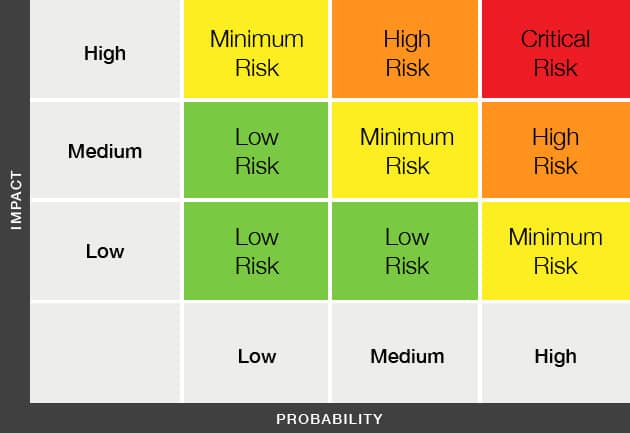

Otherwise, a simple and common approach is to create a risk matrix, where risks are identified and each rated on the probability of its occurrence, and impact, if it does.

With this simple matrix, it’s easy to see that you should focus most of your attention on the critical and high risks, some on the medium risks, and little on the low risks.
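To make the matrix idea concrete, here is a minimal sketch in Python. The three-point scales, the score thresholds, and the names `Risk`, `rate`, and `prioritize` are my own assumptions for illustration; your organization’s matrix may use different scales and labels.

```python
from dataclasses import dataclass

# Hypothetical three-point scale for both axes (an assumption, not an ITIL rule).
LEVELS = {"low": 1, "medium": 2, "high": 3}


@dataclass
class Risk:
    description: str
    probability: str  # "low", "medium", or "high"
    impact: str       # "low", "medium", or "high"


def score(risk: Risk) -> int:
    """Multiply probability by impact to get a score from 1 to 9."""
    return LEVELS[risk.probability] * LEVELS[risk.impact]


def rate(risk: Risk) -> str:
    """Map the score to a rating; the thresholds are illustrative only."""
    s = score(risk)
    if s >= 9:
        return "critical"
    if s >= 6:
        return "high"
    if s >= 3:
        return "medium"
    return "low"


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Return risks ordered from highest score to lowest."""
    return sorted(risks, key=score, reverse=True)


risks = [
    Risk("Support load increases after release", "high", "low"),
    Risk("New firewall rule blocks application traffic", "medium", "high"),
    Risk("Test results do not predict production behavior", "low", "medium"),
]

for r in prioritize(risks):
    print(f"{rate(r):8} {r.description}")
```

Sorting by score puts the risks that deserve the most attention at the top of the list, which is all the matrix is really meant to do.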

One thing to keep in mind is that all changes have some level of risk. It’s not helpful to either understate or overstate risks. Both can have disastrous impact.

Resources

This is where you identify all resources required for a successful change implementation. This includes everyone needed to build, test, implement, communicate, support, or roll back the change.

- Will the required resources be available during the development and implementation phases?

- Are other changes scheduled that require the same resources?

- Are the needed infrastructure and technical resources available (e.g. server and network capacity)?

- Is there sufficient infrastructure capacity to support the changed service? (How will you know?)

- Do all the players have what they need to be successful? (Will they be available immediately after the change is implemented?)
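The question of whether other changes need the same resources can be checked mechanically if you keep even a simple schedule. The sketch below is one possible approach, assuming a hypothetical `Booking` record and made-up change IDs and resource names; it flags any two changes that book the same resource for overlapping time windows.

```python
from datetime import datetime
from typing import NamedTuple


class Booking(NamedTuple):
    change_id: str   # hypothetical identifier for the RFC
    resource: str    # a person, team, or piece of infrastructure
    start: datetime
    end: datetime


def find_conflicts(bookings):
    """Return (resource, change_a, change_b) for overlapping bookings."""
    conflicts = []
    # Group by resource and order by start time so overlaps are adjacent.
    ordered = sorted(bookings, key=lambda b: (b.resource, b.start))
    for i, a in enumerate(ordered):
        for b in ordered[i + 1:]:
            if b.resource != a.resource:
                break  # moved on to the next resource
            # b starts at or after a, so they overlap if b starts before a ends.
            if a.change_id != b.change_id and b.start < a.end:
                conflicts.append((a.resource, a.change_id, b.change_id))
    return conflicts


bookings = [
    Booking("CHG-1", "dba-team", datetime(2024, 3, 2, 9), datetime(2024, 3, 2, 12)),
    Booking("CHG-2", "dba-team", datetime(2024, 3, 2, 11), datetime(2024, 3, 2, 13)),
    Booking("CHG-3", "network-team", datetime(2024, 3, 2, 9), datetime(2024, 3, 2, 10)),
]

for resource, first, second in find_conflicts(bookings):
    print(f"{resource}: {first} overlaps {second}")
```

A check like this only catches scheduling collisions. It says nothing about whether the people involved have what they need, which still takes a conversation.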

Responsible

Similar to resources, the “responsible” question seeks to ensure there are clearly established and understood roles and responsibilities. A RACI chart may be helpful (see What’s a RACI chart and how do I use it?). This is a critical step that helps eliminate assumptions and avoid misunderstandings.

Relationship

This is where we seek to understand how the change in question may impact or be impacted by other recent or planned changes. How do the service components fit together?

- Are there hidden dependencies to the components being changed? (What efforts have been made to identify them?)

- Are there unknown users of a service that’s being changed or decommissioned? (How do we know?)

- Are there version dependencies between components, and have they been tested?

- Does the test environment accurately represent the production environment?

- Are critical parts of the infrastructure needed to implement the change likely to be unavailable because of other changes?

Although they are beyond the scope of this article, good configuration management and change modeling can be really helpful in understanding complex relationships.

Finding the Right Balance

Out in the real world, we all know some changes are riskier than others. It’s up to you to determine how deeply you need to go in evaluating a proposed change. If a change is large or complex, or if significant risk may be present, change evaluation is warranted. Changes that involve critical business functions or significant business process changes are excellent candidates for formal evaluation.

It’s been my experience that just when you think a change is benign, you get hit with something you didn’t think about, but maybe should have.

So, let me suggest a few additions of my own – reality, respect, results, and repeat:

Reality

This is where we take a quick reality check. Yes, 12 ninjas might drop from the ceiling and cause a large impact. But in reality, how likely is it to happen? Have changes similar to the one under review been successfully implemented? Reality can be an important data point to help strike the right balance.

Data is a great reality check. Historic change information can help determine where additional evaluation is warranted and where it would be unnecessary. In reality, some change types and components are just less problematic. Likewise, some that should be harmless no-brainers can cause big problems. Use reality as a guide.
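If your change records can be exported, even a rough success rate per change type is a useful reality check. Here is a minimal sketch, assuming the history is available as (change type, succeeded) pairs; the function name `success_rates` and the sample change types are hypothetical.

```python
from collections import defaultdict


def success_rates(history):
    """Compute the fraction of successful changes for each change type.

    history: iterable of (change_type, succeeded) tuples.
    """
    counts = defaultdict(lambda: [0, 0])  # change_type -> [successes, total]
    for change_type, succeeded in history:
        counts[change_type][1] += 1
        if succeeded:
            counts[change_type][0] += 1
    return {t: ok / total for t, (ok, total) in counts.items()}


# Made-up sample data for illustration only.
history = [
    ("os-patch", True),
    ("os-patch", True),
    ("os-patch", False),
    ("firewall-rule", True),
]

for change_type, rate in sorted(success_rates(history).items()):
    print(f"{change_type}: {rate:.0%} successful")
```

Treat the numbers as a prompt for questions. A change type with only a handful of records tells you very little either way.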

Respect

The Change Advisory Board (CAB) is comprised of your best subject matter experts – those who have deep knowledge and are respected for their expertise and track record. Respect their experience and knowledge. When it comes down to it, change evaluation depends on people who are good at what they do.

Results

- Do the test plans adequately ensure the change will operate as intended?

- Has business functionality been tested? (Have the intended results been validated?)

- Do the test results meet identified criteria for success?

- Were business users involved with testing? (Were any significant issues raised?)

- Has the business reviewed the test results?

Repeat

- Has the change being evaluated been done before? (Was it successful?)

- Is it a change that’s been attempted and failed? (Have the reasons for the failure been identified and addressed?)

- Have previous changes to the service components been successful?

- If it involves vendor-supplied updates, what’s the track record of prior updates?

Making It Happen

Remember to use the R’s as a reminder to explore all aspects of a change. Effective change evaluation is core to successful service delivery and business value. Use the seven R’s of change evaluation to make sure you’re covering all the bases. Also, take a look at the extra ones I’ve suggested.

How about you? What additions would you make? Do you have any that you’ve found helpful?
