The Magical Mystery Tour of Metrics – The Real Power Inside ITSM Reporting

Back in my previous life, I would work occasionally with a mythical figure who could do magic—The Reporting Guy.

Here was a person who could pull all kinds of details from the ITSM solution and come up with the most incredible spreadsheets, pivot tables and slide decks imaginable.

There were colours, graphs, numbers that changed magically if you changed something else—all by the flick of a magic wand (or probably an Excel macro).

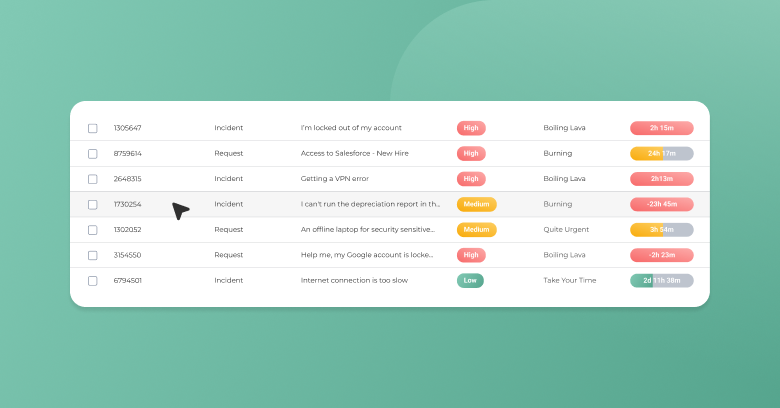

Of course there were the kinds of calculations you would expect to see in a normal ITSM solution, such as the numbers of incidents and service requests, including those resolved within their Service Level Agreement (SLA) time.
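As a rough illustration of that "resolved within SLA" figure, here is a minimal sketch. The `Ticket` structure and its field names are assumptions made up for this example, not the schema of any particular ITSM tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Ticket:
    # Hypothetical fields; a real tool will name and store these differently.
    opened: datetime
    resolved: Optional[datetime]  # None while the ticket is still open
    sla: timedelta                # agreed resolution time for this ticket


def sla_compliance(tickets):
    """Return the fraction of resolved tickets that met their SLA.

    Open tickets are ignored. Returns None if nothing has been resolved.
    """
    resolved = [t for t in tickets if t.resolved is not None]
    if not resolved:
        return None
    within = sum(1 for t in resolved if t.resolved - t.opened <= t.sla)
    return within / len(resolved)
```

Whether open tickets that have already breached should count against the figure is a policy decision; this sketch simply leaves them out.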

But the Reporting Guy had the ability to pull all kinds of facts and figures and manipulate them to do all kinds of things—a pied piper of spreadsheets, if you will.

Of course I am being perhaps a little facetious, because often in larger projects and deployments, teams work independently of each other and occasionally cross paths.

So while my erstwhile numerically-minded colleague could macro numbers to death, from my perspective at the time, so long as the tool captured that core data for it to be pulled out and acted on, then all was well.

It is only when you lift yourself away from just looking at the tool that you realise why Service Reporting deserves to be a process in itself, and needs some operational AND strategic consideration.

You come to realise that the ITSM Reporting Guy was just pulling out the bare bones of the data—sometimes the easiest things to manage, but not necessarily the right things.

Why is Reporting Important?

We measure to derive value, an understanding of efficiency (or otherwise), and to satisfy ourselves and stakeholders that we are doing the right things, and doing them well.

Everybody measures something, and most ITSM tools offer abilities to dashboard results so you can see at a glance what is open, closed, when, where, how, who—well you get the idea.

But an important concept to grasp is that we should measure performance in a meaningful way.

By that I do not mean as a stick with which to clobber a team, but as a means of improving the efficiency of the teams that are providing a service.

So Give Me the Definitive List of Metrics…

This is a common request on various forums, as if there were one standard list that fits every range of outcomes for every business, right off the bat.

Well of course, the ITIL books list the metrics considered to be best practice at the end of each process section, and they are called best practice for a reason.

It is as good a starting point as any, but it is just that.

As part of Continual Service Improvement, you should be reviewing that list and if you have developed other, maybe more sophisticated metrics, then move away from that starting point.

I Don’t Want to Have to Think About This, Just Gimme!

Sigh, tough!

You have to take a long hard look at the reasons why this information is important.

If we look at a Service Desk, figures on how efficiently it processes incoming Incidents and Requests can drive a variety of conversations with the business about improving the service.

But what about the business view?

The numbers only represent a small part of their sphere of interest and control.

How much is it costing the business for each request or incident?

Suddenly we are taking a broader look at the service end to end—not just whether an end user is satisfied they got their new smartphone in time, or their email service back.
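The cost question can be sketched very simply. This assumes you can put a single figure on what the support function costs over a period, which in practice is the hard part; the function name and the numbers in the usage note are purely illustrative.

```python
def cost_per_ticket(total_support_cost, ticket_count):
    """Average cost of handling one incident or request in a period.

    total_support_cost: everything you choose to attribute to support
    (staff, tooling, and so on) for the period, in one currency.
    ticket_count: incidents plus requests handled in the same period.
    """
    if ticket_count <= 0:
        raise ValueError("ticket_count must be positive")
    return total_support_cost / ticket_count
```

For example, a made-up monthly cost of 12000 spread over 400 tickets gives `cost_per_ticket(12000, 400)`, which is 30.0 per ticket. What you include in the total is a business decision, and it changes the answer considerably.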

Look at My Beautiful Slide Deck…Pretty Colours…

I am being facetious again, of course, but with a serious purpose—forget about facts, figures and numbers for a minute and consider this.

- If you had to stand up and explain all those pretty pictures in clear terms of positives and perhaps more importantly the negatives, could you?

- If you give a service owner or business stakeholder bad news, does anything constructive happen with those stats and reports?

- If you got hit by a bus and no-one was around to do the reports at the end of the month, would anyone notice?

When used properly, the results of your reporting feed into the continuing lifecycle of your solution.

They need to be more than just a pretty deck, and key stakeholders need to be accountable if the pictures do not look as pretty.

And much like my point above, you cannot avoid having to attach some thought and rationale behind your reports.

For example, I looked over some figures recently where there was a jump in the numbers of incidents in one particular month compared to others, and a dramatic fall-away in others.

To explain this I had to look at the bigger picture: the client was an educational establishment where incidents would spike as a new intake of students came onto the premises, with maybe another spike after Christmas when students came back with new toys (laptops, smartphones) as presents.

The numbers would dwindle over the summer vacation period as most students would return home for the holidays.

Always look to the context behind the reports.
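One way to build that context into the numbers is to compare a month against the same calendar month in earlier years, rather than against the month before. The sketch below assumes incident counts keyed by `(year, month)`; that layout and the function name are assumptions for illustration only.

```python
from statistics import mean


def deviation_from_seasonal_baseline(history, year, month):
    """Relative difference between one month and its seasonal baseline.

    history: dict mapping (year, month) -> incident count.
    The baseline is the mean count for the same calendar month in
    earlier years. Returns None when there is no usable baseline.
    """
    prior = [count for (y, m), count in history.items()
             if m == month and y < year]
    if not prior:
        return None
    baseline = mean(prior)
    if baseline == 0:
        return None
    return (history[(year, month)] - baseline) / baseline
```

A September spike at a university then shows up as close to zero deviation if every September looks like that, while a genuinely unusual month stands out.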

Food for Thought

Perhaps some other options to consider, as you build your pet list of metrics:

- Think about the overall goal the service and/or the business is trying to achieve and use that as the basis for the report.

- Next consider the operational factors around that goal and external influences around that operation.

- Then look at a number of measurements that will help you answer the questions, and move your organisation towards meeting that business goal.

Please share your thoughts in the comments or on Twitter, Google+, or Facebook where we are always listening.
