Surveys: Pat On the Head, Or Beating Stick?

Every now and again, an all-too-rare thing happens to me these days—I get great customer service!

More often than not, though, bad customer service leaves us even more agitated than the problem we needed help with in the first place.

It makes me wonder: do we, as IT Service Management professionals, value excellent service more in spite of the fact that we work in the field of Service Delivery, or because of it?

Customer service should matter in all walks of life.

Bafflingly, this is not always the case.

But do we ever bother to praise good service, or even respond to surveys?

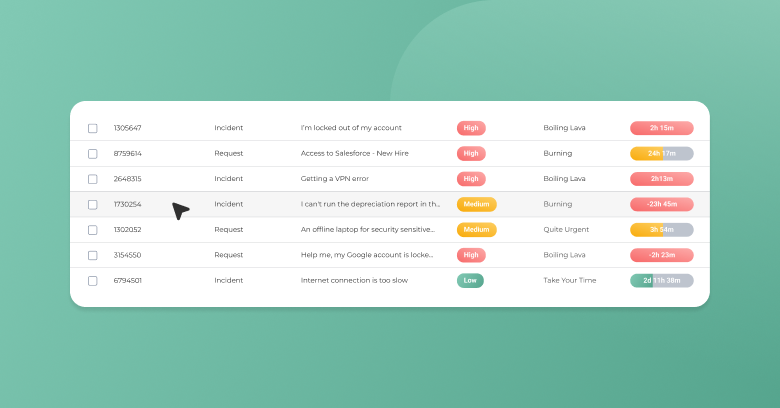

Although many tools now include a built-in survey function, running a good survey is really not as simple as all that!

Less is More

Many organisations get the survey aspect totally wrong.

A prime example is my very own bank, which changed a perfectly good online banking scheme into a cartoon-iconed, effectively dumbed-down service that promptly crashed completely on its first day.

To add insult to injury, after the umpteenth crash forced me to resort to the telephone banking service, I was sent a survey.

Making matters even worse, given my level of irritation, the survey went on for ever. There was an abundance of questions, and the longer it dragged on, the more cutting my responses became, until I had written a missive the size of War and Peace berating the whole system.

Did I ever get any feedback on my feedback? Not surprisingly, no!

Why Do We Need to Do Surveys?

To paraphrase Mark Twain: There are lies, damned lies, and in the ITSM world, there are metrics!

But it is equally important to get a feel for the softer measures of how the support structure is working, and the best people to judge whether the service has been good are the end users themselves.

Targeting Your End User

After-call survey

Please raise your hand if you’ve ever declined to stay on the phone for an after-call survey. I know I have.

There is no denying that prompting a caller for an immediate survey means the experience is still fresh in their mind (unless they have the memory of a goldfish!).

But I have always felt uncomfortable with this approach. It feels contrived and sometimes pressured, because quite often you are talking to the very person who served you, reading out a script of questions about their own service.

Telephone survey specifically targeted

As with the after-call survey, there is the element of getting an immediate response, and if the call is targeted at a specific event or incident, the feedback is much more focussed on a specific area for improvement.

The disadvantages, however, are that the call may well catch the user at an inopportune time, and perhaps they will be reluctant to provide a call-back time.

Interviews

Perhaps this is a great example of the ITIL books offering you a best-practice option that rarely happens in reality: whether as a customer of a specific support desk or as an ordinary end user outside of work, I have never been interviewed about customer service!

The only circumstance in which I can see this even remotely working is during the set-up of a brand-new desk, and even then, in my own experience, it has largely been done over the telephone.

Electronic surveys

This is by far the most common form of survey we see, yet it always strikes me as a touch ironic that it is probably the most detached method of all.

One of the most effective surveys I have received was sent to me by a company after I had contacted them for a replacement.

Why was it so effective?

It actually acknowledged that the customer’s time was precious and just asked ONE question.

That was it, just one question.

Of course, the key to this approach is providing an option to add further comments.

In this case, the customer service was prompt and very courteous, but the courier service left a lot to be desired.

The single-question approach is not unique, though.

At the recent itSMF Service Desk and SLM Seminar in the UK, Greg Stonehouse (Nottingham Trent University) shared how the university’s IT department had completely changed its approach to defining a service catalogue.

As part of that implementation, they require the customer to confirm that the service call is resolved, and at that point they put a single question in front of them: was the service good or bad?

Compare that with long-winded surveys full of never-ending options and, worse still, mandatory free-text boxes that will not let you move on until you have said your piece.

I have lost count of the surveys I have given up on halfway through, even with the chance of winning some piece of gadgetry!

Sounds Easy?

Greg Stonehouse made a point of saying that if they ever got a “bad” response, the service desk manager would be on the telephone immediately to understand why.

Indeed, after a recent survey in which I gave frank feedback about a company’s use of telephone after-service surveys, a senior member of its customer support team actively sought me out and contacted me. So it can work.

And there is more to surveys than just asking touchy-feely questions.

Using surveys properly calls for a detailed knowledge of statistics and of historical (and sometimes maybe even hysterical) reporting.

I will admit, I used to call one help desk the “Un-Help Desk”, largely because of the stamina a level-one call required.

Unless I had at least a good hour to spare, I would avoid calling them until my laptop had actually died and I could be guaranteed a visit to a local site to hand it over to an engineer in person!

But looking back now, I would have skewed all their surveys: because I waited until there was no option but local help, I gave relatively good feedback, probably at the cost of a huge loss of productivity.

My Top 5 Thoughts on Surveys

- Go for between 1 and 5 questions: the fewer, the better. Remember that this is a soft survey to help support more detailed metrics that look at much more than just the happiness of end users.

- If you are going to follow up (especially on less-than-favourable feedback), do so with someone who is prepared to listen and, more importantly, able to outline what actions will be taken, and then follow up again.

- Teams and service management need to understand that the purpose of a survey is not to provide management with a beating-stick. At the heart of this should be Continual Service Improvement.

- Keep it simple. Just because tools can provide you with a dazzling array of options, you really do not have to use them all.

- Praise good service: I cannot stress this enough. Everyone loves a good moan, but how many people actually take the time to say they were satisfied or impressed with how their issue was solved?

There are no right or wrong answers here. Businesses and organisations will try to garner as much information as they can to help improve (their perception of?) their service.

These are my thoughts, as an ITSM Deployment Architect and also as an end user and avid consumer.

What are your thoughts?

Please share your thoughts in the comments or on Twitter, Google+, or Facebook, where we are always listening.
